Multitemporal land cover classification of remote sensing data for national surveying tasks

| Team: | M. Voelsen, F. Rottensteiner, C. Heipke |

| Year: | 2019 |

| Funding: | Landesamt für Geoinformation und Landesvermessung Niedersachsen (LGLN) |

| Duration: | 2019 - 2025 |

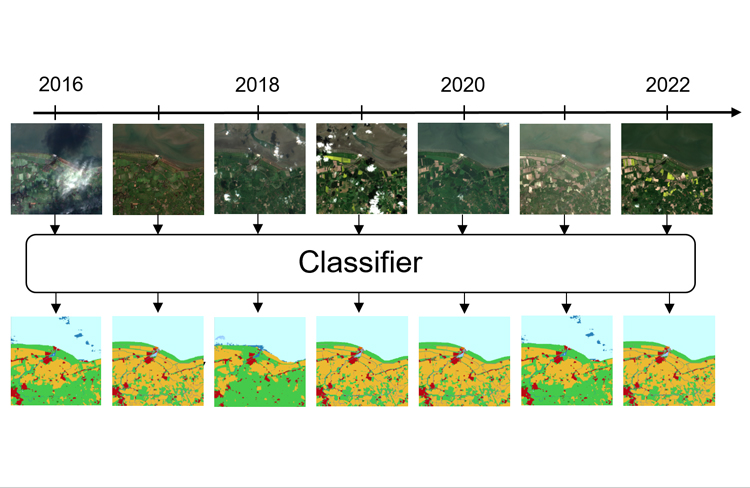

With the availability of large amounts of satellite image time series (SITS), the identification of different materials of the Earth’s surface is possible with a high temporal resolution. One of the basic tasks is the pixel-wise classification of land cover, i.e. the task of identifying the physical material of the Earth’s surface for every pixel of an image. State-of-the-art methods for solving this task in a supervised manner are based on deep learning approaches such as fully convolutional neural networks or transformers.

The main objective of this project is to use Sentinel image data to automatically update and enrich the geospatial databases of the surveying administration of Lower Saxony (LGLN), especially with regard to land cover and land use. Currently, this update relies on aerial images, which are acquired every three years. In this project, the update is instead based on the pixel-wise classification of the available satellite image time series, which are acquired every 5-6 days in the case of Sentinel-2. The Sentinel data have been collected continuously for several years by the European Space Agency (ESA) within the framework of the Copernicus program and are made available free of charge.

For the pixel-wise classification, fully convolutional neural networks (FCNs) are used to extract spatial features from the images. In addition, the temporal dimension is included in the classification process: a time series serves as input to the classifier, which provides an output for each input timestep. Transformer models are well suited to extracting temporal features because they can model dependencies within input sequences of arbitrary length, regardless of how far apart the images are in the sequence. For these reasons, transformer models are used in combination with the FCN models to extract both spatial and temporal features. The training data needed for this method are obtained from existing geodata of the LGLN, which are combined with temporally matching satellite data.
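
The temporal part of such a model can be illustrated with a minimal NumPy sketch. It is not the project's actual architecture: the function names, tensor shapes, and random weights are assumptions made for illustration, and the FCN is replaced by a random feature tensor. Each pixel attends over its own time series with single-head self-attention, and a linear layer then yields one class label per pixel and timestep.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def temporal_self_attention(features, w_q, w_k, w_v):
    """Single-head self-attention over the time axis, applied per pixel.

    features: array of shape (T, H, W, C), e.g. spatial features that an
              FCN extracted from each of the T images (assumed layout).
    Returns an array of shape (T, H, W, D).
    """
    q = features @ w_q  # (T, H, W, D)
    k = features @ w_k
    v = features @ w_v
    d = q.shape[-1]
    # scores[h, w, t, s]: similarity of timestep t to timestep s at pixel (h, w)
    scores = np.einsum("thwd,shwd->hwts", q, k) / np.sqrt(d)
    weights = softmax(scores, axis=-1)
    # Weighted sum over all timesteps s, for every output timestep t.
    return np.einsum("hwts,shwd->thwd", weights, v)


def classify_per_timestep(attended, w_cls):
    # Linear classifier on each pixel and timestep, followed by argmax.
    logits = attended @ w_cls  # (T, H, W, K)
    return logits.argmax(axis=-1)  # (T, H, W)


# Hypothetical sizes: 4 timesteps, 8x8 pixels, 16 features, 5 classes.
T, H, W, C, D, K = 4, 8, 8, 16, 16, 5
rng = np.random.default_rng(0)
features = rng.normal(size=(T, H, W, C))  # stand-in for FCN output
w_q, w_k, w_v = (rng.normal(size=(C, D)) for _ in range(3))
w_cls = rng.normal(size=(D, K))

attended = temporal_self_attention(features, w_q, w_k, w_v)
labels = classify_per_timestep(attended, w_cls)  # one label map per timestep
```

Because the attention weights depend only on feature similarity, reordering the input timesteps merely reorders the outputs in this sketch. A practical model would typically add a temporal encoding (for example, of the acquisition date) so that the order and spacing of the images are taken into account.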