Deep Domain Adaptation for the Classification of Aerial Images

| Team: | D. Wittich, F. Rottensteiner |
|---|---|
| Year: | 2018 |
| Duration: | Since 2018 |

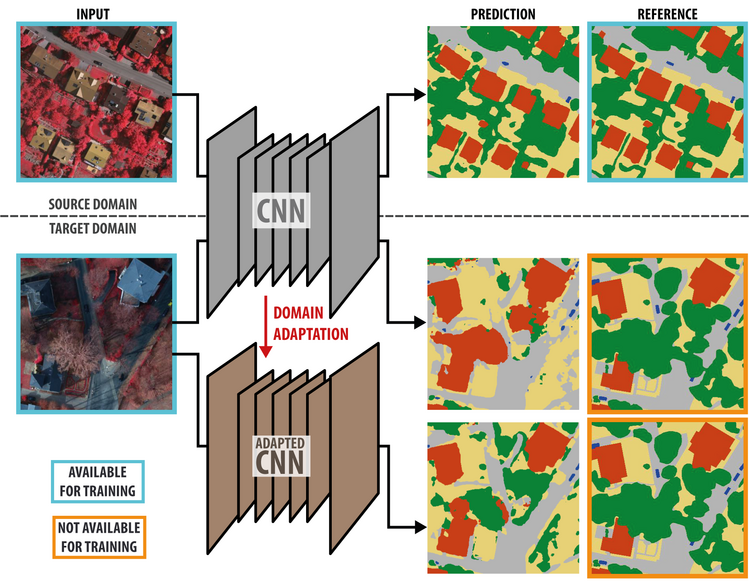

Remote sensing imagery of the Earth's surface, for example acquired from an aircraft, plays a crucial role in the generation of maps. Intermediate products such as orthophotos and digital surface models are a well-suited basis for automating this task. However, due to the high variability in the appearance of objects, model-based approaches quickly reach their limits in most applications. Machine learning techniques, e.g. for pixel-wise classification, can be adapted to new data more easily because they are trained on examples. In particular, deep learning has been shown to outperform traditional machine learning approaches whenever enough training samples are available. For pixel-wise classification, this means that images with corresponding label maps have to be provided. This requirement is a major problem, because generating label maps is very time-consuming and costly.

In this project, we address this problem and aim to improve the performance of deep neural networks on new images to be classified when only a limited amount of training data is available for them. This shall be achieved by developing methods for semi-supervised domain adaptation. In this scenario, two or more domains are considered which may differ but must be related. In practice, the domains could correspond to imagery from different cities or from different acquisition seasons. While training samples are assumed to be abundant in the source domain (e.g. data acquired in past projects), only unlabelled samples are available in the target domain (a new dataset). The methods developed for domain adaptation use both the labelled source domain samples and the unlabelled target domain samples to obtain an improved classifier for the target domain (see figure).

We explore several methods for deep domain adaptation, i.e. domain adaptation for deep learning. The first approach is based on representation matching [1]. In addition to a network that learns to classify source domain samples in a supervised way, a second feature extraction network (consisting of the first layers of the architecture) is trained to produce feature vectors for target domain samples that are indistinguishable from the representations that the source domain network produces for source domain samples. This is achieved by adversarial training: a further network, called the discriminator, is trained to predict from which domain a feature vector originates, while the feature extraction network for the target domain is simultaneously trained to “trick” the discriminator by producing features that cannot be distinguished from those generated by the source domain network. At inference time in the target domain, the target domain feature extraction network is combined with the classification layers of the source domain network. This approach achieved a stable improvement of the classification results in the target domain.

In a second approach [2], a model is first trained in a supervised way on source domain data and then adapted by encouraging it to minimize the entropy of its pixel-wise predictions for target domain samples. With several modifications to this method, a stable improvement of the classification in the target domain could again be achieved. In the future, we will investigate alternative strategies as well as combinations of different strategies in order to achieve even higher stability and better performance.
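The two competing objectives of the adversarial approach can be illustrated with a minimal NumPy sketch. It assumes that feature vectors are given as rows of an array (e.g. one per pixel) and, for brevity, replaces the discriminator network by a hypothetical linear model with parameters `w` and `b`; all function names and the random stand-in features are illustrative assumptions, not the implementation used in [1].

```python
import numpy as np

def sigmoid(z):
    z = np.clip(z, -50.0, 50.0)  # avoid overflow warnings in np.exp
    return 1.0 / (1.0 + np.exp(-z))

def discriminator_probs(features, w, b):
    # Hypothetical linear discriminator (a real one would be a small neural
    # network): probability that each row of `features` stems from the source domain.
    return sigmoid(features @ w + b)

def bce(p, label, eps=1e-7):
    # Mean binary cross-entropy between predicted probabilities and one constant label.
    p = np.clip(p, eps, 1.0 - eps)
    return float(-np.mean(label * np.log(p) + (1.0 - label) * np.log(1.0 - p)))

def discriminator_loss(p_source, p_target):
    # The discriminator is trained to output 1 for source and 0 for target features.
    return bce(p_source, 1.0) + bce(p_target, 0.0)

def target_encoder_loss(p_target):
    # The target feature extractor is trained with the inverted label:
    # it is rewarded when its features are classified as "source".
    return bce(p_target, 1.0)

# Toy usage with random stand-ins for feature vectors (one row per pixel).
rng = np.random.default_rng(0)
f_src = rng.normal(1.0, 1.0, size=(256, 8))
f_tgt = rng.normal(-1.0, 1.0, size=(256, 8))
w, b = np.ones(8), 0.0  # hypothetical discriminator parameters
p_src = discriminator_probs(f_src, w, b)
p_tgt = discriminator_probs(f_tgt, w, b)
d_loss = discriminator_loss(p_src, p_tgt)  # low: the domains are easy to tell apart
g_loss = target_encoder_loss(p_tgt)        # high: target features do not yet fool D
print(d_loss, g_loss)
```

In an actual training run, both losses would be minimized alternately with gradient descent in a deep learning framework, updating only the discriminator with the first and only the target feature extractor with the second. The toy features merely show that a discriminator which separates the domains well yields a low discriminator loss and a high loss for the target feature extractor.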
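The quantity minimized in the second approach is the entropy of the class distribution predicted at each pixel. The following minimal NumPy sketch computes its mean over an unlabelled target image, assuming network outputs (logits) of shape `(H, W, K)` for `K` classes; the function names and the number of classes are hypothetical, and the modifications described in [2] (including the weighting referred to in its title) are not reproduced.

```python
import numpy as np

def softmax(logits, axis=-1):
    z = logits - logits.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def pixelwise_entropy(probs, eps=1e-12):
    # Shannon entropy (in nats) of the class distribution at every pixel.
    # probs: (H, W, K) class probabilities -> result: (H, W).
    return -np.sum(probs * np.log(probs + eps), axis=-1)

def entropy_loss(target_logits):
    # Mean pixel-wise entropy of the predictions for one unlabelled target image;
    # minimizing it pushes the classifier towards confident predictions.
    return float(pixelwise_entropy(softmax(target_logits)).mean())

K = 6  # hypothetical number of land-cover classes
uncertain = np.zeros((4, 4, K))  # equal logits -> uniform class probabilities
confident = np.zeros((4, 4, K))
confident[..., 0] = 20.0         # one dominant class at every pixel
print(entropy_loss(uncertain), np.log(K))  # the uniform case reaches the maximum, log(K)
print(entropy_loss(confident))             # close to zero
```

This sketch only evaluates the loss; to adapt a model, the term would have to be back-propagated through the network, which requires a framework with automatic differentiation.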

Publications

[1] Wittich, D.; Rottensteiner, F. (2019): Adversarial domain adaptation for the classification of aerial images and height data using convolutional neural networks. In: ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences IV-2/W7, pp. 197–204. DOI: 10.5194/isprs-annals-IV-2-W7-197-2019

[2] Wittich, D. (2020): Deep domain adaptation by weighted entropy minimization for the classification of aerial images. In: ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences V-2, pp. 591–598.